A tried and tested success formula for lazy journalism is the build-up and tear-down pattern.

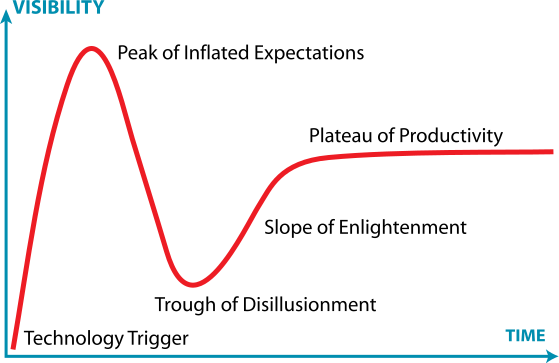

The hype comes before the fall. In the context of information technology, Gartner trademarked the aptly named term “hype cycle”. Every technology starts out in obscurity, but some take off spectacularly, promising the moon and, with the notable exception of the Apollo program, falling a bit short of it. Disillusionment then sets in, sometimes going as far as vilification of the technology or product. Eventually sentiment hits rock bottom, and a more balanced and realistic view takes hold as the technology goes mainstream.

Even Web technology followed this pattern to some extent, as the dot-com stock bubble clearly mirrored. At the height of the exuberance, the web was credited with ushering in a New Economy that would unleash unprecedented productivity growth. By now it has, of course, vastly improved our lives and economies; it just didn’t happen as rapidly and profitably as the market originally anticipated.

D‑Wave’s technology will inevitably be subjected to the same roller-coaster ride. Once you make the cover of Time magazine, the spring for the tear-down reaction is set, just waiting for a trigger. The trigger came in the form of the testing performed by Mathias Troyer et al. While all of this is to be expected in journalism, I was a bit surprised to see one of the sites in my blogroll follow this pattern as well. When D‑Wave was widely shunned by academia, Robert R. Tucci wrote quite positively about them, but he now seems to have given up all hope in their architecture in reaction to this single paper. He makes the typical case against investing in D-Wave that I’ve seen argued many times by academics invested in the gate model of quantum computing.

The field came to prominence because gate-based quantum computers have a clearly established theoretical potential to outperform classical machines. And it was Shor’s algorithm that captured the public’s imagination (and the NSA’s attention). Integer factorization is widely considered to be an NP-intermediate problem, and Shor’s algorithm could clearly crack our encryption schemes if we had a gate-based QC with thousands of qubits. Unfortunately, this is still sci-fi, and the best that has been accomplished on this architecture so far is the factorization of 21. The quantum speed-up would be there if we had the hardware, but for now it remains purely academic, with no practical relevance whatsoever.
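To make the reference to factoring 21 concrete, here is a minimal Python sketch of the number theory wrapped around Shor’s algorithm. The quantum part (finding the order r of a modulo N) is replaced here by a brute-force classical loop; that loop is exactly the step whose cost grows exponentially with the size of N on classical hardware. The function names are hypothetical and only illustrate the classical reduction.

```python
from math import gcd


def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    On a gate-based quantum computer this is the step that quantum
    period finding would perform; here it is done classically.
    """
    if gcd(a, n) != 1:
        raise ValueError("a must be coprime to n")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def shor_classical(n: int, a: int):
    """Try to split n using the order of a, as in Shor's post-processing.

    Returns a pair of factors, or None if this choice of a fails.
    """
    common = gcd(a, n)
    if common > 1:
        return common, n // common  # lucky guess: a already shares a factor
    r = find_order(a, n)
    if r % 2 == 1:
        return None  # odd order: retry with another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # a^(r/2) == -1 mod n: retry with another a
    return gcd(half - 1, n), gcd(half + 1, n)


print(shor_classical(21, 2))  # (7, 3)
```

For N = 21 and a = 2 the order is 6, so 2³ = 8 and gcd(7, 21) = 7, gcd(9, 21) = 3.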

There is little doubt in my mind that a useful gate-based quantum computer will eventually be built, just like, for instance, a space elevator. In both cases it is not a matter of ‘if’ but just a matter of ‘when’.

I’d wager we won’t see either within the next ten years.

Incidentally, it has been reported that a space elevator was considered as a Google Labs project, but was subsequently dropped because it would require too many fundamental technological breakthroughs to become a reality. On the other hand, Google snapped up a D-Wave machine.

So is this just a case of acquiring trophy hardware, as some critics on Scott’s blog contended, i.e. nothing more than a marketing gimmick? Or have they been snookered? Maybe they, too, have a gambling addiction, like the D-Wave investors imagined on the qbnets blog?

Of course none of this is the case. It just makes business sense. And this is readily apparent as soon as you let go of the obsession over quantum speed-up.

Let’s just imagine for a second a company with a computing technology that is neither transistor- nor semiconductor-based. Let’s further assume that within ten years they matured this technology so rapidly that it caught up to current CPUs in raw performance, and that they did all this with chip structures orders of magnitude larger than what conventional hardware requires. This new technology also does not suffer from leakage currents caused by unintended quantum tunneling; instead, it is designed around this effect and exploits it. Imagine that they achieved all this with a fraction of the R&D money spent on conventional chip architectures, and that, since its power consumption scales radically differently from that of current computers, putting another chip into the box would hardly double the energy consumed by the system.

A technology like this would be just the kind that IBM recently announced it would focus its research on, in its search for a way into the post-silicon future.

So our ‘hypothetical company’ sounds pretty impressive, doesn’t it? You’d think that a company like Google, with its enormous computational needs, would be very interested in test-driving an early prototype of such a technology. And since all of the above applies to D‑Wave, this is indeed exactly what Google is doing.

Quantum speed-up is simply an added bonus. To thrive, D‑Wave only needs to provide a practical performance advantage per kWh. The $10M up-front cost, on the other hand, is a non-issue. The machines are currently assembled the way cars were built before the advent of the Ford Model T. Most of the effort goes into the cooling apparatus and the interface to the chip, and there will clearly be plenty of opportunity to bring down manufacturing costs once production ramps up.

The chip itself can be mass-produced using adapted and refined lithographic processes borrowed from the semiconductor industry; hence the cost basis for a wafer of D‑Wave chips will not be that different from that of the chip in your laptop.

Just recently, D‑Wave’s CEO mentioned an IPO for the first time in a public talk (h/t Rolf D). Chances are the early D-Wave investors will be laughing at the naysayers all the way to the bank long before a gate-based quantum computer factors 42.

What is the power consumption of a D-wave system compared to a regular computer?

bettinman: About 15.5 kW, including all peripheral equipment up front. This will remain constant even as the chip grows (i.e., in its number of qubits). The average power consumption of the ten fastest supercomputers in the US is 4,100 kW, or about 265 times that of the D-Wave system.
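As a quick sanity check on the arithmetic in the reply above, here is a small Python sketch. It takes the quoted figures at face value (they are not verified here) and also converts them into energy per year of continuous operation; the helper name `annual_mwh` is hypothetical.

```python
# Figures as quoted in the reply above; taken at face value, not verified here.
DWAVE_KW = 15.5           # whole D-Wave system, peripherals included
TOP10_AVG_KW = 4_100      # average of the ten fastest US supercomputers
HOURS_PER_YEAR = 24 * 365


def annual_mwh(kw: float) -> float:
    """Energy drawn in a year of continuous operation, in MWh."""
    return kw * HOURS_PER_YEAR / 1000


ratio = TOP10_AVG_KW / DWAVE_KW
print(f"power ratio: {ratio:.1f}")                              # 264.5
print(f"D-Wave: {annual_mwh(DWAVE_KW):,.0f} MWh/yr")            # 136
print(f"top-10 average: {annual_mwh(TOP10_AVG_KW):,.0f} MWh/yr")  # 35,916
```

The ratio matches the “about 265 times” figure. Raw power draw alone says nothing about how much useful work is done per kWh, which is the quantity the post argues matters.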

impressive

Canada 1,

USA 0

http://qbnets.wordpress.com/2014/08/11/anything-for-you-luna/

Henning: Off topic, but very interesting nonetheless:

http://www.bbc.com/news/science-environment-28739373

Hi Henning: Off-topic again, but very interesting for cryptography:

http://www.newscientist.com/article/dn26135-factorisation-factory-smashes-numbercracking-record.html#.VAYA8Gd0yAI

Is there evidence of the power consumption scaling that you describe?

How far do you think D-wave is from implementing a 32-bit adder?

To clarify, my guess for the power consumption of the D-wave computer is

(refrigerator cost) + (# bits) * (control cost / bit) * (1 + (extra refrigeration cost from waste heat)).
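The commenter’s estimate can be written as a tiny Python model. All constants below are hypothetical, picked only to show the regime the thread argues about: while the refrigerator term dominates, adding qubits barely moves the total, even though each qubit still carries a nonzero marginal cost.

```python
def system_power_kw(n_qubits: int,
                    fridge_kw: float,
                    control_kw_per_qubit: float,
                    waste_heat_overhead: float) -> float:
    """(refrigerator cost) + N * (control cost / qubit) * (1 + waste-heat overhead)."""
    return fridge_kw + n_qubits * control_kw_per_qubit * (1 + waste_heat_overhead)


# Hypothetical constants, chosen only to illustrate the shape of the curve.
FRIDGE_KW = 15.0
CONTROL_KW_PER_QUBIT = 0.0005
OVERHEAD = 0.5

for n in (128, 512, 2048, 100_000):
    p = system_power_kw(n, FRIDGE_KW, CONTROL_KW_PER_QUBIT, OVERHEAD)
    print(f"{n:>7} qubits: {p:8.2f} kW")
```

Whether the per-qubit term is actually negligible is exactly what the following comments dispute; no measurements of it are cited in this thread.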

My speculation is that the first term is so large that the second term cannot be seen, but that the cost of the second term per operation is far higher than it is on classical computers. I don’t have much evidence for this, so would love to see some experiments supporting the viewpoint you advance here.

I would advise your mother-in-law to stick to index funds.

Not sure how you justify the control cost/bit term in the context of adiabatic QC. I’d be surprised to see such a scaling.

As to my mother-in-law, she invests based on the impression she has of the management. E.g. she really likes Elon Musk and hit the jackpot with Tesla stock, and she really likes what she’s seen of Geordie 🙂

My estimate was based on the idea that the local magnetic fields require power to switch. I’m not an expert on this and am hand-waving here. Also, the classical control circuitry seems to grow at least linearly with the number of qubits.

But you were the one who first said that energy costs had better scaling than silicon, and that this was part of the business case for D-Wave. What led you to that conclusion?

My understanding may be a bit naive, but to the extent that they actually implement adiabatic computing, I’d only expect a heat signature to be generated when the result state is read out. The readout will certainly scale with the number of qubits, but in practice I expect it to be negligible compared to the base load of the fridge, so you wouldn’t start to see any effect until we get to some really high-density chips.

Would certainly like to see some data on this as well.

I see that D-Wave’s website says “Power demand won’t increase as it scales to thousands of qubits” so I can understand why you would have been misled.

It is definitely crazy to imagine that power is only consumed when the result is read out. No current technology is remotely close to this ideal of reversible computing.

While it is true that the superconducting current involved does not encounter resistance, this is not the only thing necessary to run the computer. You will notice throughout the literature mention of “external fluxes”. These require power.

A quote from http://arxiv.org/abs/1004.1628

“External biases applied to the chip were provided by a custom-built 14-bit 128-channel differentially driven programmable 50 MHz arbitrary wave form generator. The signals generated at room temperature were sent into the refrigerator…”

This sounds like it will require power too.

The reason the D-wave machine uses less power than a supercomputer is that it has ~500 circuit elements and a supercomputer has billions. Each element of the D-wave machine almost certainly uses far more power, but there are fewer of them. The only way for it to be power-competitive is if there is a quantum speedup.

> The reason the D-wave machine uses less power than a supercomputer is that it has ~500 circuit elements and a supercomputer has billions.

Yes, obviously the absolute numbers matter very little at this point.

> The only way for it to be power-competitive is if there is a quantum speedup.

I don’t think this can be decided without more data (and I don’t mean data on the speed-up but on the power characteristics). To my knowledge none of the successive chips required fridge modifications, and, as you state yourself, the key is that they can currently compete with a modern conventional chip using just a couple hundred qubits.

It seems quite obvious to me that their chips’ performance has been growing nonlinearly with their integration density. How else would they have caught up with conventional hardware? After all, the chip release cycle is about the same in both cases.

So if, on the other hand, their power consumption grows linearly (or close to linearly) with the number of qubits, then no quantum speed-up is necessary to make this technology competitive (assuming they can continue on their past trajectory).

For a few hundred qubits to be competitive with a few billion bits, you would need quantum speedup. Those two points are (roughly) synonymous. I was responding to your statement that the case for D-wave makes sense when you “let go of the obsession over quantum speed-up”. Letting go of this obsession, as you suggest, would mean that matching a billion bits would require a billion qubits, and this would not have a power advantage.

The debate about whether there is a quantum speedup, or whether they have “caught up with conventional hardware”, is a different and much longer one. Presumably this is what you are saying when you say that the performance is growing nonlinearly. Most experts would say that this claim is speculative at best.

> The debate about whether there is a quantum speedup, or whether they have “caught up with conventional hardware”, is a different and much longer one. Presumably this is what you are saying when you say that the performance is growing nonlinearly. Most experts would say that this claim is speculative at best.

What I am trying to say is really quite simple, and apparently I am not saying it very well.

The early D-Wave chips were no match for classical hardware. They then doubled their chips’ density pretty consistently every 15 months, which is slightly faster than Moore’s law, but not fast enough for them to have caught up on this basis alone. So the annealing scheme they implement seems to gain more performance as the density increases, i.e. D-Wave gets more of a performance boost from doubling the qubits than you get from doubling the transistors on a conventional chip. From my point of view this observation shouldn’t be very controversial.
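The density argument in the comment above is simple compounding. The Python sketch below takes the 15-month doubling period from the comment and assumes 18- or 24-month periods for Moore’s law, since the comment does not say which figure it compares against; the helper name is hypothetical.

```python
def growth_factor(years: float, doubling_months: float) -> float:
    """Multiplicative growth after `years` if density doubles every `doubling_months`."""
    return 2 ** (years * 12 / doubling_months)


YEARS = 10
for label, months in (("D-Wave (15 mo, per the comment)", 15),
                      ("Moore's law (18 mo, assumed)", 18),
                      ("Moore's law (24 mo, assumed)", 24)):
    print(f"{label}: x{growth_factor(YEARS, months):.0f}")
```

Under these assumptions ten years of 15-month doublings give a 256x density increase, versus about 102x (18 months) or 32x (24 months). That density lead is the factor the comment says is too small to explain the catch-up on its own.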

You are now saying that D-Wave’s chips exhibit quantum speedups. This is in fact controversial among experts. But leaving that aside for now, the point of your blog post was that quantum speedup was not necessary for D-wave’s devices to be valuable. This is the point I’m arguing against.

Let me just recap briefly. The apparent lack of increased energy usage is because of the large constant term. It’s like saying that adding RAM to my laptop doesn’t increase its power use because the screen uses so much more power. Here the RAM corresponds to the qubits and the screen to the fridge. For it to be different, you need quantum speedup.

> You are now saying that D-Wave’s chips exhibit quantum speedups.

Just to be clear on this, observing that their performance grows faster with rising integration density than classical chips implies to you that there must be a quantum speed-up?

> Let me just recap briefly. The apparent lack of increased energy usage is because of the large constant term.

That’s entirely possible, but without more data I don’t consider this a foregone conclusion.

I’m now somewhat lost. “Rising integration density” just means more qubits per chip, right? The Chimera architecture hasn’t changed significantly, I don’t think.

The question is how the computational power of D-wave scales with the number of qubits N. I am 99% sure that the power costs can be modeled as a + b N, for some constants a,b, and that we are currently in the regime where a >> bN. For computational power, if you believe quantum speedup can be achieved by the D-wave architecture, then this grows more rapidly than c * N for any constant c. The presence of quantum speedup in the D-wave architecture is still an open question, both empirically and theoretically. Empirically, Alex Selby’s code (among others) is still much faster. Theoretically, no examples of speedup have been proved, even for a hypothetical noise-free version of the D-wave architecture. Counter-arguments can be made, but you should at least acknowledge that claiming a quantum speedup for D-wave is highly controversial.
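The scaling argument above can be made concrete with a short Python sketch: power is modelled as a + bN, and performance per kW is compared for performance that grows linearly in N (no speedup) and, as an arbitrary stand-in for “faster than c · N”, as N^1.5. The constants and the exponent are hypothetical and chosen only to show the shape of both curves.

```python
from typing import Callable


def perf_per_kw(n: int, a: float, b: float, perf: Callable[[int], float]) -> float:
    """Performance divided by power, with power modelled as a + b*N."""
    return perf(n) / (a + b * n)


A, B = 15.0, 0.001  # hypothetical: fixed fridge load (kW), marginal kW per qubit


def linear(n: int) -> float:
    return 1.0 * n   # c * N with c = 1: no speedup


def superlinear(n: int) -> float:
    return n ** 1.5  # arbitrary stand-in for "faster than c * N"


for n in (500, 10**4, 10**6, 10**8):
    print(n,
          round(perf_per_kw(n, A, B, linear), 1),
          round(perf_per_kw(n, A, B, superlinear), 1))
```

In the regime a ≫ bN even linear performance yields rapidly rising performance per kW, but it saturates at c/b (1000 with these constants), whereas the superlinear curve keeps growing. Both sides of the thread are describing different ends of the same curve.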

I realize that the D-wave promotional materials present this as an uncontroversial fact. You should understand that they are literally selling something, and that the science press is usually too credulous about these things.

> I’m now somewhat lost. “Rising integration density” just means more qubits per chip, right?

Yes.

> If you believe quantum speedup can be achieved by the D-wave architecture, then this grows more rapidly than c * N for any constant c.

This, to me, is the missing link in our conversation that resulted in the confusion.

In my mind, a modest better-than-linear growth in performance from one D-Wave generation to the next wouldn’t necessarily constitute hard evidence for quantum speed-up.

> The Chimera architecture hasn’t changed significantly, I don’t think.

When I last spoke to Geordie, he mentioned that they are constantly changing the details of the architecture, even though the underlying Chimera architecture stays the same.

That’s why I wouldn’t rule out that D-Wave may simply be taking advantage of incrementally smarter engineering of their hardware as they learn to control the fab process better, and that this may contribute to the faster-than-silicon performance gains. After all, their current structures are gigantic compared to what’s in your laptop’s CPU.

To me it seems to be an established fact that they realized these faster performance gains, but I am not comfortable speculating about what enables this trend without more data.

From my point of view, you argue in a somewhat backwards manner. To you any such gain would prove quantum speed-up, yet since research on the D-Wave One has not established such a speed-up, you argue that D-Wave shouldn’t have been able to make faster progress in performance than classical hardware. The latter, though, contradicts the fact that they managed to catch up to conventional chips within ten years.

Is there something ‘quantum’ to the magic that allowed them to play catch-up? Maybe, but in my blog post I argue that this doesn’t really matter as long as they can continue on this trajectory. That’s all it takes to make this commercially interesting.

I wouldn’t say they’ve “caught up” with conventional chips, or that they have established faster than linear scaling.

If you believe that they have and that 500 qubits are comparable to billions of bits, then you believe that D-wave is achieving a quantum speedup, and not just a “modest” one.

> If you believe that they have and that 500 qubits are comparable to billions of bits, then you believe that D-wave is achieving a quantum speedup, and not just a “modest” one.

If I thought that was true, I wouldn’t have conceded the bet I had with Mathias Troyer, and I wouldn’t be short four fine jugs of maple syrup 🙂

Look, I am really not quite sure what we are arguing about at this juncture.

The Troyer et al. paper shows that there is no evidence for quantum speed-up (yet?) in the D-Wave One; on the other hand, it also shows that the machine performs as fast as a current multi-core CPU running hand-optimized annealing code. And yes, the latter should have billions of transistors, so I fail to see your point.
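For readers unfamiliar with the classical baseline mentioned above, here is a minimal, unoptimized Python sketch of simulated annealing on an Ising problem of the form D-Wave targets, E = Σ J_ij s_i s_j with s_i = ±1. It assumes ±1 couplings, no local fields, and a toy ring-plus-chords graph instead of the Chimera graph. The function names, cooling schedule and parameters are hypothetical and bear no relation to the hand-optimized code used in the paper.

```python
import math
import random


def ising_energy(spins, couplings):
    """E = sum of J_ij * s_i * s_j over all edges (i, j); no local fields."""
    return sum(j * spins[a] * spins[b] for (a, b), j in couplings.items())


def random_instance(n, seed=1):
    """Random ±1 couplings on a ring plus 'diameter' chords (a toy graph, not Chimera)."""
    rng = random.Random(seed)
    edges = [(i, (i + 1) % n) for i in range(n)] + [(i, i + n // 2) for i in range(n // 2)]
    return {edge: rng.choice((-1, 1)) for edge in edges}


def simulated_annealing(n, couplings, sweeps=2000, t_start=3.0, t_end=0.05, seed=0):
    """Single-spin-flip Metropolis annealing with a geometric cooling schedule."""
    rng = random.Random(seed)
    # Adjacency list so the energy change of one flip costs O(degree).
    neighbours = [[] for _ in range(n)]
    for (a, b), j in couplings.items():
        neighbours[a].append((b, j))
        neighbours[b].append((a, j))
    spins = [rng.choice((-1, 1)) for _ in range(n)]
    for sweep in range(sweeps):
        # Temperature falls geometrically from t_start to t_end.
        t = t_start * (t_end / t_start) ** (sweep / max(1, sweeps - 1))
        for _ in range(n):  # one sweep = n randomly chosen flip attempts
            i = rng.randrange(n)
            # Energy change if spin i were flipped.
            delta = -2 * spins[i] * sum(j * spins[k] for k, j in neighbours[i])
            if delta <= 0 or rng.random() < math.exp(-delta / t):
                spins[i] = -spins[i]
    return spins, ising_energy(spins, couplings)


couplings = random_instance(12)
spins, energy = simulated_annealing(12, couplings)
print("final energy:", energy)
```

Annealing is a heuristic: it returns a low-energy state, not necessarily the ground state, which is why benchmarks of this kind typically measure the time needed to reach the ground state with high probability over many runs.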