If anybody in the wider IT community has heard about Quantum Computing, it’s usually in the context of how it may obliterate our current industry standard encryption (e.g. RSA). Probably Peter Shor didn’t realize that he was about to define the entire research field when he published his work on what is now the most famous (and notorious) quantum algorithm. But once it was reported that he uncovered a method that could potentially speed up RSA decryption, anxiety and angst spread the news far and wide.

Odds are, if it wasn’t for the IT media stir that this news caused, quantum information technology would still be mostly considered just another academic curiosity.

With the modest means of my blog, I have tried to create some awareness that quantum computing is much bigger than this, when pointing at use cases in enterprise IT and social web services, but admittedly these were general observations that did not descend to the level of implementation details. That’s why I was very pleased when coming across this paper describing how quantum computing can be leveraged to calculate Google’s page rank. The authors argue that for a realistic web graph topology, their approach can

… provide a polynomial quantum speedup. Moreover, the quantum PageRank state can be used in “q-sampling” protocols for testing properties of distributions, which require exponentially fewer measurements than all classical schemes …

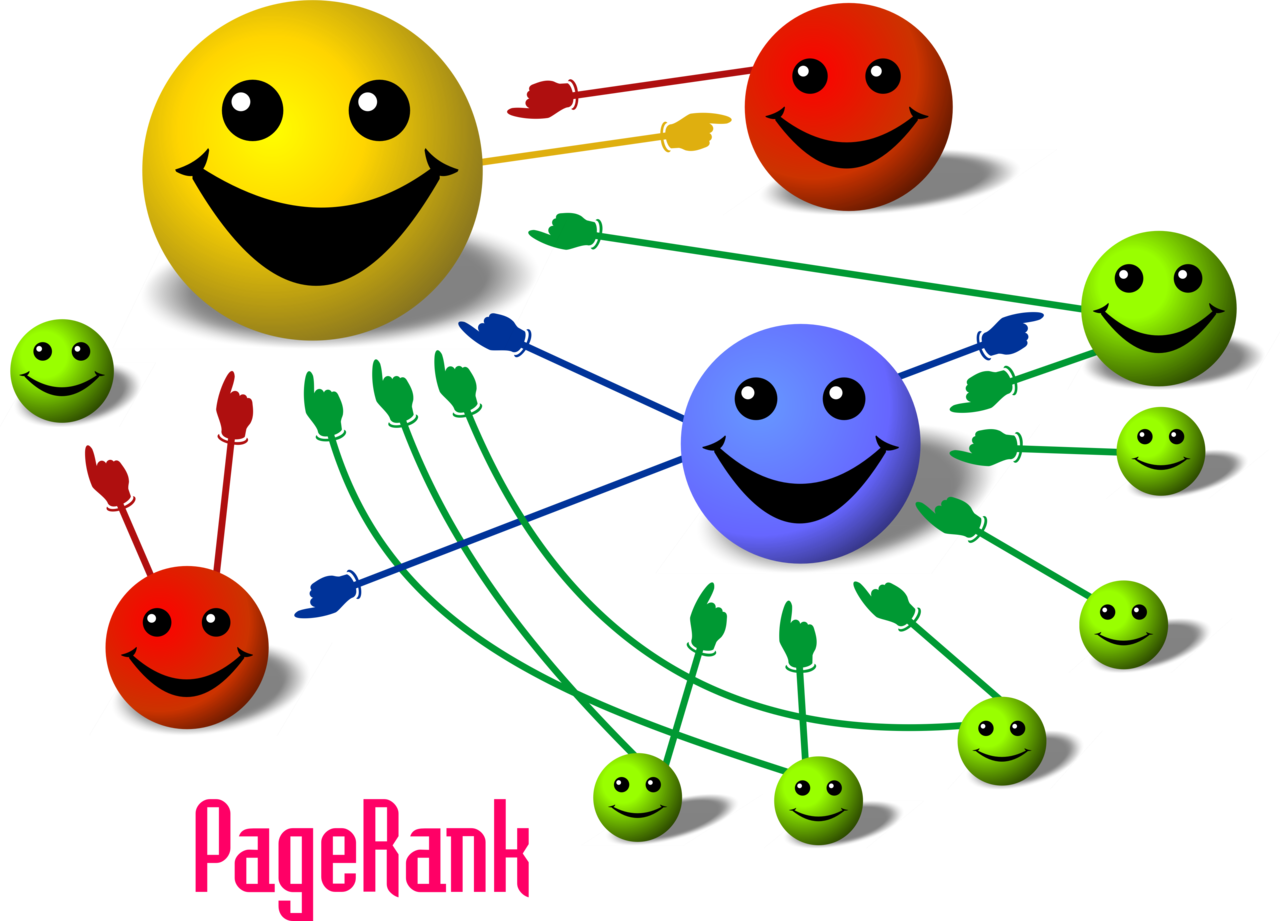

So why does this matter? Most bloggers will have heard about Google’s PageRank algorithm as it is crucial in determining how far up your site will appear in a Google search. But just as most people don’t really think about how power gets to your home’s outlet, few will have contemplated how this algorithm works and how much raw CPU power Google must spend to keep it updated.

For those inclined to learn more how these kinds of algorithms work, there is no better treat than this blog post on exactly this topic from the one and only Michael Nielsen (who’s standard tome of quantum computing is deservedly the first reference in the paper).

Google doesn’t advertise how often and how quickly they can run PageRank on the entire visible web space. Clearly, the more often a search engine can update the rank values the better. It is also a safe bet that executing this algorithm on a continuous basis will contribute to Google’s considerable power consumption. It is laudable that Google invests in “green” data centers but it seems they could save a lot on their energy bill if they’d get behind quantum computing. The “adiabatic” classification of this algorithm is a give-away. The unitary process evolution actually does not increase entropy, and thus does not require energy input for that part of the computation, unlike classical computing. That is why adiabatic quantum computing machines like the D-Wave One have the potential to drastically lower power consumption in data centers. It’s really a twofer: You get superior algorithms and save power.

Oh, and by the way, the PageRank for this blog is still 0 out of 10

Update: The way my post is written it may give the impression that the D-Wave One can execute this algorithm. But as this machine is a specialized QC device this is not necessarily the case. (This question was raised in the related LinkeIn discussion to this post that can be found here).

One thought on “Quantum Computing – So what is it good for? – Updated below”

Comments are closed.