Qubit spin states in diamond defects don’t last forever, but they can last remarkably long even at room temperature (on the order of microseconds, which is a long time when it comes to computing).

So this is yet another interesting system added to the list of candidates for potential QC hardware.

Nevertheless, when it comes to the realization of scalable quantum computers, qubit decoherence time may very well be eclipsed in importance by another time span: 20 years, the length of time for which patents are valid (in the US this can include software algorithms).

With D-Wave and Google leading the way, we may be getting there faster than most industry experts predicted. Certainly the odds are very high that it won’t take another two decades for useable universal QC machines to be built.

But how do we get to the point of bootstrapping a new quantum technology industry? DK Matai addressed this in a recent blog post and identified five key questions, which I attempt to address below (I took the liberty of slightly abbreviating the questions; please check at the link for the unabridged version).

The challenges DK laid out will require much more than a blog post (or a LinkedIn comment that I recycled here), especially since his view is wider than only Quantum Information science. That is why the following thoughts are by no means comprehensive answers, and very much incomplete, but they may provide a starting point.

1. How do we prioritise the commercialisation of critical Quantum Technology 2.0 components, networks and entire systems both nationally and internationally?

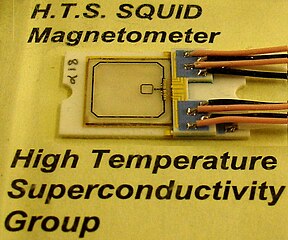

The prioritization should be based on the disruptive potential. Take quantum cryptography versus quantum computing, for example. Quantum encryption could stamp out fraud that exploits some technical weaknesses, but it won’t address the more dominant social engineering deceptions. On the upside, it will also facilitate ironclad cryptocurrencies. Yet, if Feynman’s vision of the universal quantum simulator comes to fruition, we will be able to tackle collective quantum dynamics that are computationally intractable with conventional computers. This encompasses everything from simulating high temperature superconductivity to complex (bio-)chemical dynamics. ETH’s Matthias Troyer gave an excellent overview of these killer apps for quantum computing in his recent Google talk. I especially like his example of nitrogen fixation. Nature manages to accomplish this with minimal energy expenditure in some bacteria, but industrially we only have the century-old Haber-Bosch process, which in modern plants still results in half a ton of CO2 for each ton of NH3. If we could simulate and understand the chemical pathway that these bacteria follow, we could eliminate one of the major industrial sources of carbon dioxide.

2. Which financial, technological, scientific, industrial and infrastructure partners are the ideal co-creators to invent, to innovate and to deploy new Quantum technologies on a medium to large scale around the world?

This will vary drastically by technology. To pick a basic example, a quantum clock per se is just a better clock, but put it into a Galileo/GPS satellite and the drastic improvement in timekeeping will immediately translate to a higher location triangulation accuracy, as well as allow for a better mapping of the earth’s gravitational field/mass distribution.
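As a rough back-of-the-envelope illustration of why timekeeping matters so much here: a satellite ranging signal travels at the speed of light, so to first order a clock error translates into a distance error of c times that error. The sketch below only shows this simple relation. The function name is my own illustrative choice, and it ignores satellite geometry, atmospheric delays and all the other error sources of a real navigation system.

```python
# Speed of light in vacuum, in metres per second (exact by SI definition).
SPEED_OF_LIGHT_M_PER_S = 299_792_458

def ranging_error_m(clock_error_s):
    """First-order distance error caused by a given clock error.

    Hypothetical helper for illustration only: it multiplies the timing
    error by the speed of light and ignores every other error source.
    """
    return SPEED_OF_LIGHT_M_PER_S * clock_error_s

# A timing error of one nanosecond corresponds to roughly 0.3 m of range error.
for clock_error in (1e-6, 1e-9, 1e-12):
    print(f"{clock_error:.0e} s -> {ranging_error_m(clock_error):.6f} m")
```

Every factor of ten gained in clock stability buys, in this simplified picture, a factor of ten in the ranging contribution to the position error.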

3. What is the process to prioritise investment, marketing and sales in Quantum Technologies to create the billion dollar “killer apps”?

As sketched out above, the real prize to me is universal quantum computation/simulation. Considerable efforts have to go into building such machines, but that doesn’t mean that you cannot already start to develop software for them. Any coding for new quantum platforms, even if they are already here (as in the case of the D-Wave 2), will involve emulators on classical hardware, because you want to debug and test your code before submitting it to the more expensive quantum resource. In my mind, building such an environment in a collaborative fashion to showcase and develop quantum algorithms should be the first step. To me this appears feasible within an accelerated timescale (months rather than years). I think such an effort is critical to offset the closed-source and tightly license-controlled approach that, for instance, Microsoft is following with its development of the LIQUi|> platform.
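To make the emulator idea a bit more concrete, here is a minimal sketch of what emulating a gate-model quantum program on classical hardware boils down to: keeping the full state vector in memory and applying gates to it. All function names are my own illustrative choices and do not correspond to any existing platform. A real emulator would need far more (measurement, noise models, larger gate sets), and the memory required doubles with every added qubit.

```python
import math

def apply_single_qubit_gate(state, gate, target):
    """Apply a 2x2 gate to qubit `target` of a state vector (list of amplitudes)."""
    bit = 1 << target
    new_state = [0j] * len(state)
    for index, amplitude in enumerate(state):
        b = (index >> target) & 1  # current value of the target qubit
        # |b> is mapped to gate[0][b]|0> + gate[1][b]|1>
        new_state[index & ~bit] += gate[0][b] * amplitude
        new_state[index | bit] += gate[1][b] * amplitude
    return new_state

def apply_cnot(state, control, target):
    """Flip qubit `target` in every basis state where qubit `control` is 1."""
    new_state = list(state)
    for index in range(len(state)):
        control_set = (index >> control) & 1
        target_set = (index >> target) & 1
        if control_set and not target_set:
            partner = index | (1 << target)
            new_state[index], new_state[partner] = state[partner], state[index]
    return new_state

def bell_state_probabilities():
    """Prepare a two-qubit Bell state and return the measurement probabilities."""
    s = 1 / math.sqrt(2)
    hadamard = [[s, s], [s, -s]]
    state = [1, 0, 0, 0]  # both qubits start in |0>
    state = apply_single_qubit_gate(state, hadamard, target=0)
    state = apply_cnot(state, control=0, target=1)
    return [abs(amplitude) ** 2 for amplitude in state]

# Only the outcomes 00 and 11 occur, each with probability of about one half.
print(bell_state_probabilities())
```

Being able to inspect the full state vector like this is exactly what makes an emulator useful for debugging, since the real quantum hardware only ever gives you measurement outcomes.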

4. How do the government agencies, funding Quantum Tech 2.0 Research and Development in the hundreds of millions each year, see further light so that funding can be directed to specific commercial goals with specific commercial end users in mind?

This to me seems to be the biggest challenge. The number of research papers produced in this field is enormous. Much of it is computational theory. While the theory has its merits, I think governmental funding should emphasize programs that have a clearly defined agenda towards ambitious yet attainable goals, that is, research that will result in actual hardware and/or commercially applicable software implementations (e.g. the UCSB Martinis agenda). Yet, governments shouldn’t be in the position to pick a winning design, as was inadvertently done for fusion research, where ITER’s funding requirements are now crowding out all other approaches. The latter is a template for how not to go about it.

5. How to create an International Quantum Tech 2.0 Super Exchange that brings together all the global centres of excellence, as well as all the relevant financiers, customers and commercial partners to create Quantum “Killer Apps”?

On a grassroots level, I think open source initiatives (e.g. a LIQUiD alternative) could become catalysts to bring academic excellence centers and commercial players into alignment. This at least is my impression based on conversations with several people involved in the commercial and academic realm. On the other hand, as with any open source product, commercialization won’t be easy. Yet this may be less of a concern in this emerging industry, as the IP will be in the quantum algorithms, and they will most likely be executed with quantum resources tied to a SaaS offering.
